
Google Home and API.AI Integration

Author: Dylan Wallace Email: wallad3@unlv.nevada.edu

Date: Last modified on 01/03/17

Keywords: Smart Home, API.AI, API, SDK, Android, Java

This tutorial is the third part of a series of tutorials on Smart Home development. If you have not yet completed the Philips Hue Control Tutorial, please do so first, as this tutorial builds on it. This tutorial serves as a starting point for API.AI development on Android. API.AI development can also be done with iOS, Web, and many third-party SDKs, but we will not cover these here. For more info on those, see the API.AI SDKs website.

Overview

API.AI is a cloud-based service that allows developers to create dialogs with their users, and to bring Natural Language Understanding (NLU) and Machine Learning (ML) into their applications. The API.AI framework is based on a web GUI that allows developers to create custom Intents, Entities, Actions, and Integrations for their Agents. API.AI integrates with many platforms, such as Facebook Messenger, Amazon Alexa, Google Home, and Microsoft Cortana. It is a powerful tool for building interactive chatbots and agents that make the user experience of your app more seamless.

Agents are individual conversation or command packages, each with its own set of intents, entities, and actions. An example of an agent is a chatbot for finding new recipes for common foods. The Agent is really the assistant as a whole, and API.AI allows you to manage multiple agents with its web GUI. For more info on Agents, visit the API.AI Documentation.

Intents in API.AI are meanings that are mapped to a user's speech or text input. If a user asked “Ok Google, what's the weather like?”, the corresponding intent would be get_weather_info or something along those lines. Intents are simply a way for developers to translate user input (speech or text) into actionable data. For more info on Intents, visit the API.AI Documentation.

Entities are data fields that are filled in from user input. Take the example of an AI assistant that finds clothes based on parameters that the user sets. The entities for an app like this would be things like clothing_type, color, size, etc. When a user says “Ok Google, find me red shirts in large”, API.AI would map the value “red” to color, “shirt” to clothing_type, and “large” to size. This process is called slot-filling: it fills the entities that an action needs with the necessary data. For more info on Entities, visit the API.AI Documentation.
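To make slot-filling more concrete, here is a toy Java sketch of the clothing example. It is only an illustration under loud assumptions: the class name `SlotFillingSketch`, the `fillSlots` method, and the hard-coded value lists are all hypothetical, and the naive keyword matching shown here is not how API.AI performs the mapping (that happens in the cloud service).

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class SlotFillingSketch {
    // Hypothetical entity definitions: entity name -> values it accepts.
    static final Map<String, List<String>> ENTITIES = new LinkedHashMap<>();
    static {
        ENTITIES.put("color", Arrays.asList("red", "blue", "green"));
        ENTITIES.put("clothing_type", Arrays.asList("shirt", "pants", "jacket"));
        ENTITIES.put("size", Arrays.asList("small", "medium", "large"));
    }

    // Scan the utterance word by word and fill each entity with the first matching value.
    static Map<String, String> fillSlots(String utterance) {
        Map<String, String> slots = new LinkedHashMap<>();
        for (String word : utterance.toLowerCase().split("\\W+")) {
            // Crude plural handling so that "shirts" matches "shirt".
            String singular = word.endsWith("s") ? word.substring(0, word.length() - 1) : word;
            for (Map.Entry<String, List<String>> entity : ENTITIES.entrySet()) {
                List<String> values = entity.getValue();
                if (values.contains(word)) {
                    slots.putIfAbsent(entity.getKey(), word);
                } else if (values.contains(singular)) {
                    slots.putIfAbsent(entity.getKey(), singular);
                }
            }
        }
        return slots;
    }

    public static void main(String[] args) {
        // prints {color=red, clothing_type=shirt, size=large}
        System.out.println(fillSlots("Ok Google, find me red shirts in large"));
    }
}
```

An utterance that leaves out a value, such as “find me shirts”, simply produces a map without the color and size keys.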

Finally, Actions are what the agent executes when an intent is triggered. This can be done with webhooks, SDK integration, a speech response, or a combination of the three. This gives the developer a lot of flexibility when creating dialogs and actions for their users. Actions have parameters, which are the entities used by an action. You can mark certain parameters as required, and the agent will prompt the user for any that are missing, which keeps the conversation running smoothly. For more info on Actions and parameters, visit the API.AI Documentation.
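The required-parameter behavior can be pictured with a small Java sketch. Everything here is assumed for illustration: the class `RequiredParameterSketch`, the `nextPrompt` method, and the prompt texts are hypothetical, and in practice the agent handles this prompting for you on the API.AI side.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class RequiredParameterSketch {
    // Hypothetical required parameters, each with the prompt to ask when it is missing.
    static final Map<String, String> PROMPTS = new LinkedHashMap<>();
    static {
        PROMPTS.put("color", "What color are you looking for?");
        PROMPTS.put("size", "What size do you need?");
    }

    // Returns the prompt for the first required parameter that is still empty,
    // or null when every required parameter has a value.
    static String nextPrompt(Map<String, String> filled) {
        for (Map.Entry<String, String> required : PROMPTS.entrySet()) {
            String value = filled.get(required.getKey());
            if (value == null || value.isEmpty()) {
                return required.getValue();
            }
        }
        return null;
    }

    public static void main(String[] args) {
        Map<String, String> filled = new LinkedHashMap<>();
        filled.put("color", "red");
        // prints What size do you need?
        System.out.println(nextPrompt(filled));
    }
}
```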

Downloads

To use API.AI in an Android app, we simply need to download the Android SDK, hosted on GitHub, and import it into Android Studio. If you have not yet set up Android Studio, follow the Nest Control Tutorial for more info on this before proceeding with development.

Creating an API.AI Agent

First, you will need to register for an API.AI account. This is best done with an existing Google developer account. You can do this on the API.AI website.

Agent

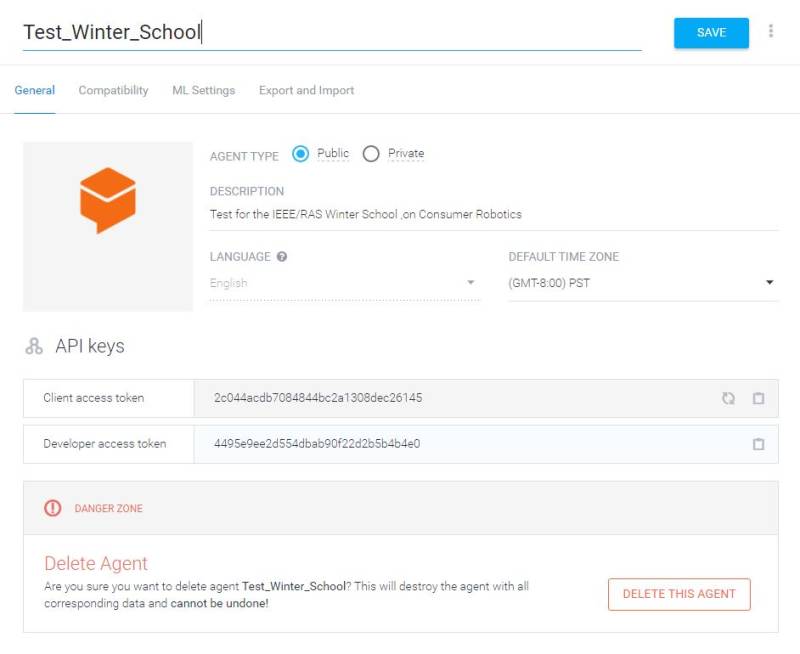

Once you have created your account, create a new agent using the top-left tab of the web GUI. Fill in the details as you please. Now we have an agent to work with. For this tutorial, we will use the example of an agent that sets the wake-up time for a given date. This could later be integrated with things such as the Nest API and Hue API to control lighting and temperature for wake-up scenarios. Here is a picture of what your Agent should end up looking like after you create it:

As you can see, API.AI has generated both a client and developer token for use when developing your own API.AI apps. We will use these later when integrating the SDK on Android.

Entities

The first step for customizing your agent is adding entities to store any data that you may gain from the users' requests. There are many built-in system entities for common data types such as location, date, time, etc. Since all we strictly need is date and time, the system entities would be enough and no custom entities are required. However, for tutorial purposes we will also add a custom entity for the mood we want to be woken up to. Here is a picture of what that looks like:

As you can see, we can define multiple moods that the user may be in, and even synonyms for these moods. We will provide some of the basic ones that we can think of, and then check “Allow Automated Expansion” so that users can give other moods that we have not yet defined.
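As a rough mental model of an entity with synonyms, consider the following Java sketch. The mood values, the class `MoodEntitySketch`, and the `resolve` method are hypothetical. In particular, the `allowAutomatedExpansion` flag is only a crude stand-in that passes unknown words through unchanged; it does not reproduce how API.AI recognizes new values.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class MoodEntitySketch {
    // Hypothetical mood entity: reference value -> synonyms (including the value itself).
    static final Map<String, List<String>> MOOD = new LinkedHashMap<>();
    static {
        MOOD.put("happy", Arrays.asList("happy", "cheerful", "joyful"));
        MOOD.put("calm", Arrays.asList("calm", "relaxed", "peaceful"));
        MOOD.put("energetic", Arrays.asList("energetic", "lively", "active"));
    }

    // Map a spoken word to its reference value. Unknown words are accepted as-is
    // only when expansion is allowed; otherwise null is returned.
    static String resolve(String word, boolean allowAutomatedExpansion) {
        String lower = word.toLowerCase();
        for (Map.Entry<String, List<String>> mood : MOOD.entrySet()) {
            if (mood.getValue().contains(lower)) {
                return mood.getKey();
            }
        }
        return allowAutomatedExpansion ? lower : null;
    }

    public static void main(String[] args) {
        System.out.println(resolve("Cheerful", false)); // prints happy
        System.out.println(resolve("grumpy", true));    // prints grumpy
    }
}
```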

Intents

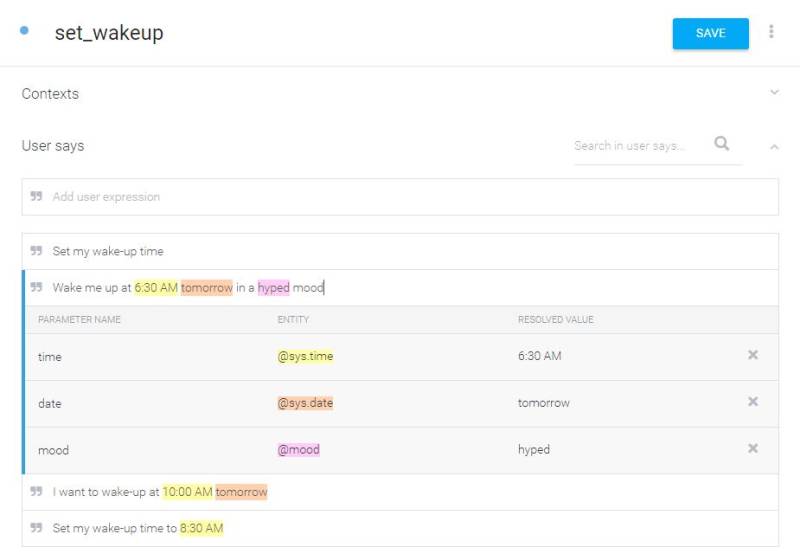

The next step is creating intents for your users' commands. We will name our intent set_wakeup. To start, enter some phrases that you would imagine a user saying to trigger this intent. Try to vary your phrasing, and include examples that leave out parameters as well. Here is what that might end up looking like:

As you can see, API.AI has automatically mapped some of the words in the commands to system entities that we will use. This is done automatically here, but you can also do it manually for your own entities by highlighting the word that you want to fill an entity, and selecting the appropriate entity from the drop-down menu.

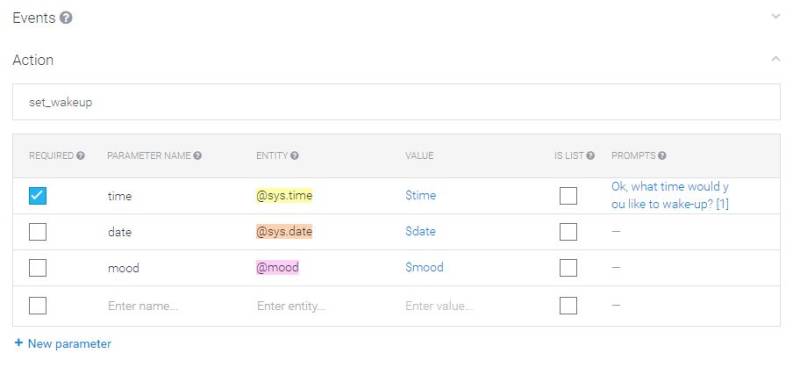

Below our User Says section, we have the action that will be performed. We will define the name for this action as set_wakeup (this does not need to be the same as our intent, but it can be). Since we had parameters auto-highlighted, they were also added to this action. We can choose which ones we want as required. If they are not given in the user command, then we can define prompts for the agent to query the user for them. This is done under the prompts section, to the right of the parameter table.

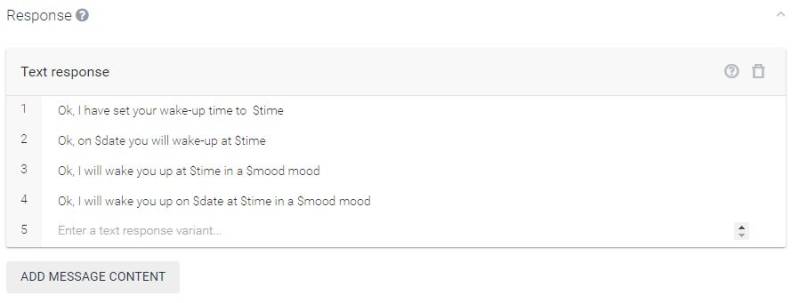

Finally, we need to define the speech response for our agent. We can add parameters to fill the response by using the $parameter format, and we can also add multiple responses that will happen depending on what parameters are filled. As you can see, we want to vary our responses for a wide combination of optional parameters.
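The idea of $parameter responses with several variants can be sketched in Java as follows. This is a minimal illustration, assuming that a variant is usable only when every parameter it references has a value; the class `ResponseTemplateSketch`, its methods, and the response texts are hypothetical, and the selection logic that API.AI itself applies may differ.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class ResponseTemplateSketch {
    // Matches placeholders such as $time or $date.
    static final Pattern PARAM = Pattern.compile("\\$(\\w+)");

    // Replace every $name with its value; return null if a referenced parameter is missing.
    static String fill(String template, Map<String, String> params) {
        Matcher matcher = PARAM.matcher(template);
        StringBuffer out = new StringBuffer();
        while (matcher.find()) {
            String value = params.get(matcher.group(1));
            if (value == null || value.isEmpty()) {
                return null;
            }
            matcher.appendReplacement(out, Matcher.quoteReplacement(value));
        }
        matcher.appendTail(out);
        return out.toString();
    }

    // Return the first variant whose parameters are all filled, or null if none fits.
    static String respond(List<String> templates, Map<String, String> params) {
        for (String template : templates) {
            String response = fill(template, params);
            if (response != null) {
                return response;
            }
        }
        return null;
    }

    public static void main(String[] args) {
        List<String> templates = Arrays.asList(
                "Okay, I will wake you up at $time on $date.",
                "Okay, I will wake you up at $time.",
                "Okay, your wake-up is set.");
        Map<String, String> params = new HashMap<>();
        params.put("time", "7:00 AM");
        // prints Okay, I will wake you up at 7:00 AM.
        System.out.println(respond(templates, params));
    }
}
```

Ordering the variants from most to least specific means the most detailed response that can be completed is the one returned.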

Note that there is a section at the top of the intent called Contexts. This is an area where we can build more advanced conversations by defining input and output contexts for intents. For this tutorial's purposes, we will not use these, but you can read more about them in the API.AI Documentation.

Once we have fully defined our intent, we are ready to connect the agent to the Android SDK.

Setting up Android Studio Project

For this tutorial we will use the sample app to interface with our custom agent. First, we need to set up the SDK in Android Studio. To do this, follow the same steps outlined in the Nest Control Tutorial. Do not worry about the access tokens part, as the API.AI SDK uses a different authentication procedure.

Once you have imported the project, do a clean build to ensure no errors occur. If they do, fix them by installing the necessary libraries/platforms for the SDK.

Finally, to connect the SDK to the agent that we created, we need to provide the Client Access Token, which can be found under the Agent Settings. Copy this token and add it to the ACCESS_TOKEN field of the Config.java file. Save your changes and rebuild the project. If the build completes without errors, we are ready to deploy.
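As a rough guide to what the edit amounts to, here is a stripped-down sketch. Only the ACCESS_TOKEN field name comes from this tutorial; the class name `ConfigSketch`, the placeholder value, and the `isConfigured` helper are assumptions for illustration, and the real Config.java in the sample app may contain other fields that should be left as they are.

```java
class ConfigSketch {
    // Placeholder value, not a real token. In the sample app, paste the
    // Client Access Token from the Agent Settings page in its place.
    static final String PLACEHOLDER = "YOUR_CLIENT_ACCESS_TOKEN";
    static final String ACCESS_TOKEN = PLACEHOLDER;

    // Hypothetical helper (not part of the SDK): catches the common mistake
    // of building the app with an empty or placeholder token.
    static boolean isConfigured(String token) {
        return token != null && !token.trim().isEmpty() && !token.equals(PLACEHOLDER);
    }

    public static void main(String[] args) {
        if (!isConfigured(ACCESS_TOKEN)) {
            System.out.println("Set ACCESS_TOKEN to your Client Access Token before deploying.");
        }
    }
}
```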

Deployment

For deployment, we will follow the same steps as outlined in the Nest Control Tutorial. Make sure that both Developer mode and USB Debugging are enabled in order for the app to deploy. Install the app, and open it up for testing.

Testing

To test, simply use the Button Sample in the app. This will take in the speech, send it to Google's NLU service, and then pass it on to API.AI for processing through your intents. The agent will then return the speech response to the phone, and the app will also display the JSON text returned in the response. This JSON text is what a custom app would use to act on intents. It could allow us to integrate things such as the Nest API and Hue API into our app to develop a Smart Home Integration App.
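Acting on an intent in a custom app could look roughly like the Java sketch below. It assumes the action name and parameters have already been extracted from the returned JSON into a string and a map; the class `ActionDispatchSketch`, the `handle` method, and the returned messages are hypothetical, and a real app would call the Nest or Hue APIs where this sketch only builds a description.

```java
import java.util.HashMap;
import java.util.Map;

class ActionDispatchSketch {
    // Decide what to do for a given action name and its parameters.
    static String handle(String action, Map<String, String> params) {
        if ("set_wakeup".equals(action)) {
            String time = params.get("time");
            if (time == null) {
                return "Missing time; nothing scheduled.";
            }
            // The mood parameter is optional in this sketch.
            String mood = params.containsKey("mood") ? params.get("mood") : "default";
            return "Schedule wake-up at " + time + " with " + mood + " lighting";
        }
        return "No handler for action: " + action;
    }

    public static void main(String[] args) {
        Map<String, String> params = new HashMap<>();
        params.put("time", "07:00:00");
        params.put("mood", "calm");
        // prints Schedule wake-up at 07:00:00 with calm lighting
        System.out.println(handle("set_wakeup", params));
    }
}
```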

For questions, clarifications, etc, Email: wallad3@unlv.nevada.edu